(Actually a fork of RawTherapee)

ART is written by the Italian programmer Alberto Griggio, who aimed to keep the best of RawTherapee (RT) whilst making it easier to use. One of the new features is local masking.

I recently discovered ART when I wanted to apply 2 grad filters to an image. RT only allows one application of the effect. There is the obvious workaround: apply one grad filter, save the image, reopen the saved image in RT and apply a second grad filter.

I was hoping that ART would allow more than one application of a grad filter, but it does not. However, for my immediate requirement the masking feature of ART (not present in RT) solves the problem.

Above is the image with just some basic global adjustments. What I now want to do is apply a grad to darken the sky. But the sky is reflected in the water, so that will need some darkening too, probably with a mirror image of the first grad filter.

Enter the Mask

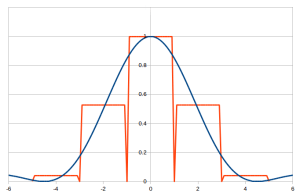

The required mask divides the image into 3 horizontal bands: sky, ground, and water. It does this with a single blurred (feathered) rectangular mask across the middle band, i.e. the ground.

We would then expect any adjustment to affect the area that is not masked, but the author of ART seems to use the word in the opposite sense, so that adjustments apply to the masked area. No problem, just click the “invert” button. Whatever adjustment is then applied will affect the sky and the water, in a similar way to 2 grad filters.
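For anyone who thinks better in numbers, here is a rough sketch of the idea in plain Python. It is not how ART builds its masks; the function names, the linear feathering and the tiny 12-row "image" are all assumptions made up for illustration. Because the mask is a horizontal band, one weight per row is enough to show what happens.

```python
def band_mask(height, top, bottom, feather):
    """Per-row weights for a horizontal band covering rows top..bottom.

    Weight is 1.0 inside the band and falls linearly to 0.0 over
    `feather` rows on either side. The linear falloff is an assumed,
    crude stand-in for a blurred edge; `feather` must be positive.
    """
    weights = []
    for row in range(height):
        if top <= row <= bottom:
            distance = 0
        elif row < top:
            distance = top - row
        else:
            distance = row - bottom
        weights.append(max(0.0, 1.0 - distance / feather))
    return weights


def invert(weights):
    """Swap the masked and unmasked areas (hypothetical helper)."""
    return [1.0 - w for w in weights]


def darken_rows(rows, weights, strength):
    """Scale each row's brightness down in proportion to its weight."""
    return [value * (1.0 - strength * w) for value, w in zip(rows, weights)]


# A 12-row image of uniform brightness 100, with the "ground" on rows 5-6.
ground = band_mask(height=12, top=5, bottom=6, feather=4)
sky_and_water = invert(ground)
result = darken_rows([100.0] * 12, sky_and_water, strength=0.5)
```

With these made-up numbers the top and bottom rows are darkened the most, the ground rows are left alone, and the rows in between fade gradually. The result is symmetrical about the ground, which is exactly the "grad plus reflected grad" effect I was after.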

Whilst this is a very specific case of requiring 2 grads, I am sure it is not uncommon: whenever a sky is reflected in a lake, any adjustment to the sky needs a matching adjustment to the surface of the lake. Below is the result.